Some kinds of data are protected by international regulations (e.g. personal data, intellectual property).

What is the Best Programming Language for Web Scraping?

Python is the most commonly used programming language for web scraping. It has a wide range of libraries and frameworks useful in web scraping: they let you make HTTP requests, parse data, set up proxies, run requests across multiple threads, and store and process the scraped data. Thanks to this variety of tools, Python can handle all the necessary tasks, whether that is parsing dynamic data, setting up a proxy, or working with a simple HTTP request.
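As a rough illustration of the "HTTP request" and "parse data" steps mentioned above, here is a minimal sketch that uses only Python's standard library. The names `TitleAndLinkParser`, `fetch`, `parse` and `SAMPLE_HTML`, as well as the `example-scraper/0.1` user agent, are made up for this example; a real project would more likely rely on a dedicated scraping library.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class TitleAndLinkParser(HTMLParser):
    """Collects the page <title> text and every href found on <a> tags."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.links = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def fetch(url, timeout=10):
    """Hypothetical helper: download a page and return its body as text."""
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def parse(html):
    """Return (title, links) extracted from an HTML string."""
    parser = TitleAndLinkParser()
    parser.feed(html)
    return parser.title.strip(), parser.links


# Sample markup so the sketch runs without network access (an assumption,
# not a real page). With a real URL you would call parse(fetch(url)).
SAMPLE_HTML = """<html><head><title>Example page</title></head>
<body><a href="/about">About</a> <a href="https://example.com/blog">Blog</a></body></html>"""

title, links = parse(SAMPLE_HTML)
print(title)
print(links)
```

Keeping `fetch` and `parse` separate means the parsing logic can be tried on saved HTML before any request is sent to a live site.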

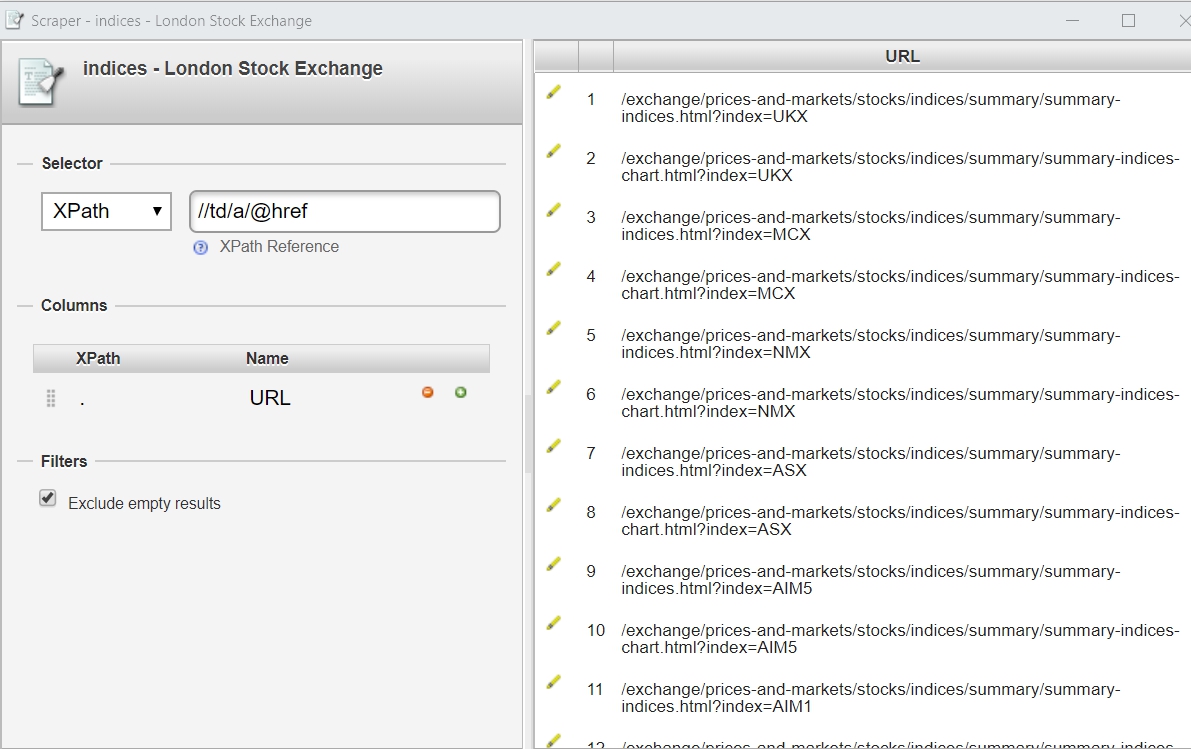

Categories of Web Scrapers

- Pre-built and self-built scrapers (e.g. Scrapy)
- Web scraping browser extensions (e.g. Scraper Chrome Extension)
- Scraper software and web crawlers (e.g. Screaming Frog)
- Cloud-based scrapers and web crawlers

Pre-built and self-built web scrapers are scrapers created and executed through a programming language such as Python or JavaScript. They require knowledge of computer programming and are limited by the programmer's skills.

Web scraping browser extensions are extensions added to a web browser that allow the user to scrape web pages in real time as they navigate. Scraper extensions are the simplest web scraping tools. They are usually free and require little prior knowledge of web scraping. An example of a web scraping browser extension is Scraper Chrome Extension.

Scraper software is software installed on your computer that provides a user interface to scrape the web. It is often called a web crawler because it provides recursive web scraping features. Scraper software uses the computer's IP address and is limited to the speed and capacity of the computer it runs on.

Cloud-based scrapers are software hosted on web servers that provides an interface and the server resources to scrape the web. Cloud-based scrapers use the server's IP address and server capacity to crawl the web. These features allow uninterrupted, fast scraping and minimize the risk of personal IP addresses being blocked. See how to scrape and prevent your IP from being blocked.

If you don't know whether you should use a web scraper or a web crawler, ask yourself this question: "Do I need to discover and extract many pages from a website?" For smaller projects, web scraping is very useful.

Web crawlers such as Screaming Frog are essentially web scrapers. They are much more powerful than homemade web scrapers. While most web scrapers are built to scrape a list of pages that you give them, web crawlers have a very complex structure that recursively "follows" links found on the pages they crawl. They also take care of most of the challenges that come up in web scraping. They are also much more expensive.

Building your own web crawler using web scraping techniques can become very complex, very fast.

Web Scrapers

Building your own web scraper, or using a browser-based scraper, allows you to quickly fetch the content of a web page on demand, without the hurdle of downloading, opening and running an application like a web crawler. For example, a Chrome extension may be better for scraping pages while you browse. Self-built web scrapers allow you to scrape content and reuse it within your code infrastructure in a way that an external web crawler can't.

In the United States, web scraping is legal as long as you scrape data publicly available on the internet.
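To make the difference between a scraper (a fixed list of pages) and a crawler (following the links it finds) more concrete, here is a small Python sketch. Everything in it is an assumption made for illustration: `FAKE_SITE` is an invented in-memory website, `fake_fetch` stands in for a real HTTP request, and the crawler uses a simple breadth-first queue rather than the far more elaborate logic of a production crawler.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse

# Hypothetical in-memory "website" so the sketch runs without network access.
FAKE_SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="https://other.example/x">X</a>',
    "https://example.com/b": '<a href="/">Home</a> <a href="/c">C</a>',
    "https://example.com/c": "No links here",
}


def fake_fetch(url):
    """Stand-in for a real HTTP request; returns "" for unknown URLs."""
    return FAKE_SITE.get(url, "")


class LinkParser(HTMLParser):
    """Collects the href of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def extract_links(base_url, html):
    """Return absolute URLs for all links found in the HTML."""
    parser = LinkParser()
    parser.feed(html)
    return [urljoin(base_url, href) for href in parser.links]


def scrape(urls, fetch):
    """Scraper: visits only the pages it is given."""
    return {url: fetch(url) for url in urls}


def crawl(start_url, fetch, max_pages=100):
    """Crawler: discovers pages by following same-host links breadth-first."""
    host = urlparse(start_url).netloc
    queue = deque([start_url])
    seen = {start_url}
    pages = {}
    while queue and len(pages) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        pages[url] = html
        for link in extract_links(url, html):
            # Stay on the starting host and never queue a URL twice.
            if urlparse(link).netloc == host and link not in seen:
                seen.add(link)
                queue.append(link)
    return pages


scraped = scrape(["https://example.com/a"], fake_fetch)
crawled = crawl("https://example.com/", fake_fetch)
print(sorted(scraped))
print(sorted(crawled))
```

The scraper returns only the single page it was handed, while the crawler starts from the home page and ends up with every page on the same host. The `seen` set and the `max_pages` limit are the minimum safeguards against loops and runaway crawls; politeness delays, robots.txt handling and retries are left out of this sketch.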
